Node.js + LLM in Production: Ollama and AI APIs Without Dependencies

From ChatGPT prototypes to production systems with total control: how a startup can save 90% on AI costs by running local models

The Problem Nobody Wants to Admit

Your startup builds a product with the OpenAI API. It works. The first clients love it. But in Q3 you face reality:

- The OpenAI bill hit €8,000/month (six months ago it was €200).

- An enterprise client asks: "Where does our data live?" → You can't answer.

- Latency doubled because you are making 5 calls to OpenAI per request.

- Someone on the team says: "Can't we use Llama locally?"

This is where reality sets in: prototyping with proprietary APIs is easy; moving to production without them is where developers disappear for a week.

In 2026, that week is not necessary.

NodeLLM, Ollama, and the JavaScript ecosystem for LLMs have matured enough for a 3-person startup to deploy a robust AI system without touching Python, without using luxury GPUs, and without paying €8,000/month to OpenAI.

This guide is for developers who want to build AI APIs that work in production, with code they can maintain, without every feature request meaning a call to OpenAI.

Why Node.js For LLMs?

The question is legitimate. Shouldn't this be done in Python?

Short answer: Yes, but not always.

Long answer:

JavaScript is the default backend language at many startups. If your architecture is already Node.js (Express, NestJS, Fastify), adding AI in the same stack means:

- A single codebase.

- Same language, same mindset.

- Unified testing and deployment.

- No complex Python ↔️ Node bridges.

Furthermore, in 2026, Node.js libraries for LLMs are production-ready:

- NodeLLM (backend-first, multi-provider)

- LangChain.js (chains and agents)

- Ollama JavaScript library, npm package ollama (local models via the Ollama server)

- node-llama-cpp (Node.js bindings for llama.cpp; runs GGUF models in-process)

The 2026 Stack: From Prototypes to Production

Level 1: Rapid Prototypes (Week 1)

```bash
npm install openai  # or @anthropic-ai/sdk, or cohere-ai
```

- Use case: Demos, MVPs, pilots.

- Cost: Pay-per-token API. No overhead.

- Problem: No control. If OpenAI goes down, your app goes down. If the bill explodes, you explode.

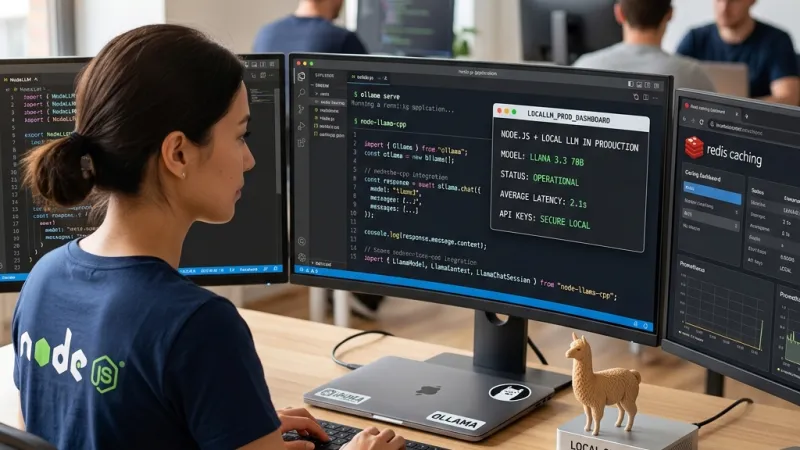

Level 2: Self-Hosted Local (Week 2-3)

Tools: Ollama (local server, optional UI), Ollama Node SDK or node-llama-cpp (connection from Node), Express (your API).

- Use case: SaaS with guaranteed privacy, offline-first, total cost control.

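- Cost: Zero (besides hardware). An M2 MacBook with 64 GB of unified memory can run a 4-bit quantized Llama 3.3 70B (roughly 40+ GB of weights), though slowly; 7-8B models such as Mistral run comfortably on 16 GB.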

- Problem: Latency and VRAM if the model is large.

Level 3: Hybrid + Observability (Month 2)

Stack: NodeLLM (multi-provider orchestration), Vector DB (Pinecone, Weaviate, or local with Qdrant), Redis (embeddings caching), Prometheus + Grafana (monitoring).

- Use case: Enterprise production. Scalable, observable, automatic fallback.

- Cost: Optimized. Proprietary APIs only when necessary. Local when possible.

Real Implementation: Chatbot in 2 Hours

Option 1: Local + Ollama (No Money)

Step 1: Install Ollama

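```bash
# macOS / Windows: download the installer from ollama.com
# Linux: curl -fsSL https://ollama.com/install.sh | sh
# The server listens on http://localhost:11434 (started by the app, or run: ollama serve)

# Download a model
ollama pull llama3.2
ollama pull mistral
```

Step 2: Node.js Server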

```javascript
// server.js (npm install express ollama)
const express = require('express');
const { Ollama } = require('ollama');

const app = express();
app.use(express.json());

// The ollama client takes the server address as `host`
const ollama = new Ollama({ host: 'http://localhost:11434' });

app.post('/api/chat', async (req, res) => {
  const { message } = req.body;
  if (typeof message !== 'string' || message.trim() === '') {
    return res.status(400).json({ error: 'message must be a non-empty string' });
  }

  try {
    const response = await ollama.generate({
      model: 'mistral', // change according to your model
      prompt: message,
      stream: false,
    });
    res.json({ reply: response.response }); // the generated text is in .response
  } catch (error) {
    res.status(500).json({ error: error.message });
  }
});

app.listen(3000, () => console.log('API running on port 3000'));
```

Step 3: Test

```bash
curl -X POST http://localhost:3000/api/chat \
  -H "Content-Type: application/json" \
  -d '{"message":"What is AI?"}'
```

Cost: €0. Time: 30 minutes.

Limitation: Local models respond slowly (5-15 s per answer, depending on the model and GPU).
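If you want to drop the ollama package too: Node 18+ ships a global fetch, and Ollama's REST endpoint POST /api/generate accepts the same model, prompt, and stream fields as the SDK call above, returning the text in a response field. A minimal sketch; ollamaGenerate is a hypothetical helper, and the fetchImpl parameter exists only so it can be tested without a running server:

```javascript
// Minimal Ollama client using Node 18+'s built-in fetch (no SDK).
// fetchImpl is injectable so the function can be tested offline.
async function ollamaGenerate(prompt, {
  host = 'http://localhost:11434',
  model = 'mistral',
  fetchImpl = fetch,
} = {}) {
  const res = await fetchImpl(`${host}/api/generate`, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ model, prompt, stream: false }),
  });
  if (!res.ok) {
    throw new Error(`Ollama returned HTTP ${res.status}`);
  }
  const data = await res.json();
  return data.response; // the generated text
}
```

Against a local server with mistral pulled, ollamaGenerate('What is AI?').then(console.log) prints the answer.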

Option 2: NodeLLM + OpenAI (Control + APIs)

```javascript
// server.js (using NodeLLM; reuses the Express app from Option 1)
const { NodeLLM } = require('@node-llm/core');

// Zero-config: reads the provider API key from environment variables.
// If the chat object keeps conversation history, create one per request
// (or per user session) instead, so users don't share context.
const llm = NodeLLM.chat('gpt-4o');

app.post('/api/chat', async (req, res) => {
  const { message } = req.body;
  try {
    const response = await llm.ask(message);
    res.json({ reply: response.content });
  } catch (error) {
    res.status(500).json({ error: error.message });
  }
});
```

Advantage: The exact same code works with OpenAI, Anthropic, Gemini, local Ollama...

```javascript
// Change the provider by changing one string
const llm = NodeLLM.chat('claude-3.5-sonnet'); // Now it's Anthropic
// const llm = NodeLLM.chat('mistral-large'); // Or Mistral
```

Cost: Pay-per-token with APIs, with full control over which model (and provider) you use.
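Part of what makes one-line switching possible is that many backends speak the same OpenAI-style Chat Completions format; Ollama, for example, exposes an OpenAI-compatible endpoint under http://localhost:11434/v1. A minimal provider-agnostic sketch using only fetch; the PROVIDERS table and chatCompletion helper are hypothetical names, not a library API, and fetchImpl is injectable for testing:

```javascript
// Same request shape, different base URL: OpenAI or a local Ollama server.
const PROVIDERS = {
  openai: { baseUrl: 'https://api.openai.com/v1', apiKey: process.env.OPENAI_API_KEY },
  ollama: { baseUrl: 'http://localhost:11434/v1', apiKey: 'ollama' }, // required by the format, ignored by Ollama
};

async function chatCompletion(providerName, model, userMessage, fetchImpl = fetch) {
  if (!Object.hasOwn(PROVIDERS, providerName)) {
    throw new Error(`Unknown provider: ${providerName}`);
  }
  const { baseUrl, apiKey } = PROVIDERS[providerName];

  const res = await fetchImpl(`${baseUrl}/chat/completions`, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json', Authorization: `Bearer ${apiKey}` },
    body: JSON.stringify({ model, messages: [{ role: 'user', content: userMessage }] }),
  });
  if (!res.ok) throw new Error(`${providerName} returned HTTP ${res.status}`);
  const data = await res.json();
  return data.choices[0].message.content; // first completion's text
}
```

chatCompletion('ollama', 'mistral', 'What is AI?') and chatCompletion('openai', 'gpt-4o', 'What is AI?') differ only in their arguments.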

Option 3: Streaming (Real-Time Responses)

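The client sees the text as it is generated, token by token (like ChatGPT).

```javascript
// EventSource (used by the frontend below) can only send GET requests,
// so the message travels in the query string
app.get('/api/chat-stream', async (req, res) => {
  const message = String(req.query.message || '');

  res.setHeader('Content-Type', 'text/event-stream');
  res.setHeader('Cache-Control', 'no-cache');
  res.setHeader('Connection', 'keep-alive');
  res.flushHeaders(); // open the stream immediately

  try {
    for await (const chunk of llm.stream(message)) {
      res.write(`data: ${JSON.stringify({ content: chunk.content })}\n\n`);
    }
    res.write('data: [DONE]\n\n');
  } catch (error) {
    res.write(`data: ${JSON.stringify({ error: error.message })}\n\n`);
  } finally {
    res.end();
  }
});
```

Frontend (Vanilla JavaScript):

```javascript
const question = 'What is AI?';
const eventSource = new EventSource(`/api/chat-stream?message=${encodeURIComponent(question)}`);
let text = '';

eventSource.onmessage = (event) => {
  // [DONE] is not JSON; close so the browser does not reconnect and re-ask
  if (event.data === '[DONE]') {
    eventSource.close();
    return;
  }
  const data = JSON.parse(event.data);
  if (data.error) {
    eventSource.close();
    return;
  }
  if (data.content) {
    text += data.content;
    document.getElementById('response').textContent = text;
  }
};

eventSource.onerror = () => {
  eventSource.close();
};
```

Cost: Same as Option 2. UX: Much better, because users see the first words almost immediately instead of waiting for the full answer.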
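EventSource only issues GET requests, so with it the prompt has to travel in the URL. To keep POST, read the response body from fetch() and split the SSE frames yourself; network chunks don't respect event boundaries, so the parser must buffer partial frames. A minimal sketch (createSSEParser is a hypothetical helper; it handles only single-line data: fields separated by "\n\n", which is all the server above emits):

```javascript
// Incremental parser for `data: ...\n\n` frames.
// Feed it raw text chunks; it calls onData once per complete event payload.
function createSSEParser(onData) {
  let buffer = '';
  return function push(chunk) {
    buffer += chunk;
    let boundary;
    // A blank line ("\n\n") terminates each event
    while ((boundary = buffer.indexOf('\n\n')) !== -1) {
      const rawEvent = buffer.slice(0, boundary);
      buffer = buffer.slice(boundary + 2);
      for (const line of rawEvent.split('\n')) {
        if (line.startsWith('data: ')) onData(line.slice(6));
      }
    }
  };
}
```

In the browser, pipe response.body through new TextDecoderStream(), read it with getReader(), pass each decoded chunk to push, and stop when a payload equals [DONE].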

Real Costs: Local vs OpenAI API

Case: SaaS with 1,000 active users/month

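Assumption: 5 calls per user on average, each with ~500 input and ~500 output tokens.

Option 1: OpenAI API (GPT-4)

- Input cost: $0.03 / 1K tokens

- Output cost: $0.06 / 1K tokens

- Total tokens: 1,000 users × 5 calls × 500 = 2.5M input + 2.5M output tokens

- Bill: 2.5M × $0.03/1K + 2.5M × $0.06/1K = $75 + $150 ≈ $225/month (~€200-250)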
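The same arithmetic as a small function, so you can plug in your own traffic and current prices. It assumes each call has ~500 input and ~500 output tokens, which is what the ~€200-250 figure implies; prices are parameters, so check your provider's pricing page rather than trusting the example values:

```javascript
// Monthly API bill for a model priced per token.
// Prices are in $ per 1,000 tokens; all inputs are your own estimates.
function monthlyApiCost({ users, callsPerUser, inputTokensPerCall, outputTokensPerCall,
                          inputPricePer1K, outputPricePer1K }) {
  const calls = users * callsPerUser;
  const inputCost = ((calls * inputTokensPerCall) / 1000) * inputPricePer1K;
  const outputCost = ((calls * outputTokensPerCall) / 1000) * outputPricePer1K;
  return inputCost + outputCost;
}

// GPT-4 scenario: 1,000 users × 5 calls, 500 input + 500 output tokens per call
const bill = monthlyApiCost({
  users: 1000, callsPerUser: 5,
  inputTokensPerCall: 500, outputTokensPerCall: 500,
  inputPricePer1K: 0.03, outputPricePer1K: 0.06,
});
console.log(`$${bill.toFixed(2)}/month`); // $225.00/month
```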

Option 2: Ollama + M2 Mac

- Hardware: €1,500 (one-time, amortized)

- Electricity: ~€5/month

- Development: 40 hours (one-time)

- Recurring bill: €5/month
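Whether local hardware pays off is a break-even question: divide the one-time cost by what you save each month. A back-of-the-envelope sketch that treats € and $ as roughly equal and ignores the 40 development hours (add them at your own hourly rate); breakEvenMonths is a hypothetical helper:

```javascript
// Months until a one-time hardware purchase beats a recurring API bill.
// Returns Infinity when the local setup never pays for itself.
function breakEvenMonths(hardwareCost, monthlyApiBill, monthlyLocalCost) {
  const monthlySavings = monthlyApiBill - monthlyLocalCost;
  if (monthlySavings <= 0) return Infinity;
  return hardwareCost / monthlySavings;
}

// €1,500 Mac vs a ~€225/month API bill, with ~€5/month electricity
console.log(breakEvenMonths(1500, 225, 5).toFixed(1)); // 6.8
```

At smaller volumes the savings shrink and the break-even point moves out accordingly.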

Option 3: Hybrid (Smart Routing)

```javascript
// If the question is simple → local Mistral (no API cost)
// If it requires complex reasoning → GPT-4o (paid)
const selectModel = async (message) => {
  const complexity = await estimateComplexity(message); // your own heuristic (not shown)
  if (complexity < 3) {
    return 'mistral-local'; // no per-token cost; latency depends on your hardware (seconds, not ms)
  }
  return 'gpt-4o'; // paid per token (a fraction of a cent per call)
};
```

Result (assuming 70% of queries are simple):

- 70% queries → Mistral (free)

- 30% queries → GPT-4o (paid)

- Average bill: ~€25/month

- Savings: ~90% vs sending every call to GPT-4 (~€200-250/month)
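estimateComplexity is left undefined above. One possible heuristic, purely illustrative: longer prompts, pasted code, and "reasoning" keywords raise the score. The keyword list and weights are assumptions to tune on your own traffic (matching is by substring, so "improve" also counts as "prove"):

```javascript
// Hypothetical complexity score; compare against the threshold of 3 used in selectModel.
const REASONING_HINTS = ['why', 'compare', 'explain', 'analyze', 'step by step', 'trade-off', 'design', 'prove'];

function estimateComplexity(message) {
  const text = message.toLowerCase();
  const words = text.split(/\s+/).filter(Boolean).length;
  let score = 0;
  if (words > 30) score += 1; // long question
  if (words > 100) score += 1; // very long question
  if (text.includes('```')) score += 2; // code pasted into the prompt
  for (const hint of REASONING_HINTS) {
    if (text.includes(hint)) score += 1;
  }
  return score;
}

console.log(estimateComplexity('What are your opening hours?')); // 0
console.log(estimateComplexity('Explain step by step why our design fails, and compare it with the alternative')); // 5
```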

Production Patterns

1. Response Caching

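```javascript
const crypto = require('crypto');
const { createClient } = require('redis'); // node-redis v4+

const client = createClient(); // defaults to redis://localhost:6379
client.on('error', (err) => console.error('Redis error:', err));
client.connect().catch(console.error); // connect once at startup

app.post('/api/chat', async (req, res) => {
  const { message } = req.body;
  const key = `chat:${crypto.createHash('sha256').update(message).digest('hex')}`;

  // Try to read from cache
  const cached = await client.get(key);
  if (cached) {
    return res.json(JSON.parse(cached));
  }

  // If not in cache, generate response
  const response = await llm.ask(message);

  // Save to cache for 24 hours (EX is in seconds)
  await client.set(key, JSON.stringify(response), { EX: 86400 });
  res.json(response);
});
```

Impact: A cache hit skips the model call entirely, so a repeated question is answered in milliseconds at zero token cost. The overall gain depends on your hit rate, and this key only matches identical messages.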
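Two refinements, sketched without Redis so they run anywhere: normalizing the message before hashing (so "What is AI?" and " what is ai? " share an entry), and a tiny in-memory TTL cache that can stand in for Redis in development or tests. cacheKey and TtlCache are hypothetical helpers, and the injectable now clock exists only for testing:

```javascript
const crypto = require('node:crypto');

// Normalize case and whitespace before hashing so trivial variants hit the same entry
function cacheKey(message) {
  const normalized = message.trim().toLowerCase().replace(/\s+/g, ' ');
  return 'chat:' + crypto.createHash('sha256').update(normalized).digest('hex');
}

// Minimal in-memory cache with per-entry expiry (single process only)
class TtlCache {
  constructor(ttlMs, now = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now;
    this.entries = new Map();
  }

  get(key) {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // lazily evict expired entries
      return undefined;
    }
    return entry.value;
  }

  set(key, value) {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}
```

Lowercasing is a trade-off: it raises the hit rate but merges questions where case matters. Entries that are never read again are never evicted here, so cap the size or keep Redis in production.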

2. Rate Limiting

```javascript
const rateLimit = require('express-rate-limit');

const limiter = rateLimit({
  windowMs: 60 * 1000, // 1 minute
  max: 10, // 10 requests/minute per IP
  message: 'Too many requests, please try again later',
});

app.post('/api/chat', limiter, async (req, res) => {
  // ... your logic
});
```

Protects against: Spam, abuse, explosive costs with APIs.
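Requests per minute don't bound spend: ten requests that each burn 4,000 tokens cost far more than ten short ones. A complementary guard is a per-user token budget. A minimal in-memory sketch (TokenBudget is a hypothetical class; in production you would keep the counters in Redis so all instances share them):

```javascript
// Per-user token budget that resets every windowMs (e.g. 24 h).
class TokenBudget {
  constructor(maxTokens, windowMs, now = Date.now) {
    this.maxTokens = maxTokens;
    this.windowMs = windowMs;
    this.now = now;
    this.usage = new Map(); // userId -> { used, windowStart }
  }

  // Return the user's counter, starting a fresh window if the old one expired
  #current(userId) {
    const t = this.now();
    let entry = this.usage.get(userId);
    if (!entry || t - entry.windowStart >= this.windowMs) {
      entry = { used: 0, windowStart: t };
      this.usage.set(userId, entry);
    }
    return entry;
  }

  // Check before calling the model
  canSpend(userId, estimatedTokens) {
    return this.#current(userId).used + estimatedTokens <= this.maxTokens;
  }

  // Record actual usage after the call returns
  record(userId, tokens) {
    this.#current(userId).used += tokens;
  }
}
```

In the handler, answer 429 when budget.canSpend(userId, estimate) is false, and call budget.record(userId, tokensUsed) after the model responds.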

3. Model Versioning

```javascript
const MODELS = {
  v1: 'gpt-4o', // 2026-01
  v2: 'gpt-4.5', // 2026-03
  experimental: 'o1', // New
};

app.post('/api/chat', async (req, res) => {
  const { message, version = 'v1' } = req.body;
  // Only accept known keys; anything else falls back to v1
  const model = Object.hasOwn(MODELS, version) ? MODELS[version] : MODELS.v1;
  const response = await NodeLLM.chat(model).ask(message);
  res.json(response);
});
```

Advantage: Clients pin a model version, so you can roll out or A/B test a new model without breaking existing integrations.
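For an actual A/B test you usually don't let clients pick the version; you route a stable share of users to the candidate model. Hashing the user ID gives each user a fixed bucket, so the same person always sees the same model. A sketch (assignModelVersion and bucketOf are hypothetical helpers; the 10% share is an example):

```javascript
const crypto = require('node:crypto');

// Map a user ID to a stable bucket in [0, 100) from the first 4 bytes of its SHA-256
function bucketOf(userId) {
  const digest = crypto.createHash('sha256').update(String(userId)).digest();
  return digest.readUInt32BE(0) % 100;
}

// Send `percent`% of users to the candidate version, everyone else to the control
function assignModelVersion(userId, { control = 'v1', candidate = 'v2', percent = 10 } = {}) {
  return bucketOf(userId) < percent ? candidate : control;
}
```

The result is a key into the MODELS table, so raising percent gradually rolls the candidate out without touching clients.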

4. Error Handling + Fallback

```javascript
const chatWithFallback = async (message) => {
  try {
    // Try OpenAI first (better quality)
    return await NodeLLM.chat('gpt-4o').ask(message);
  } catch (error) {
    console.warn(`OpenAI failed (${error.message}), using local model...`);
    try {
      // Fallback to local Mistral (assumes 'mistral' is configured to resolve to your Ollama model)
      return await NodeLLM.chat('mistral').ask(message);
    } catch (innerError) {
      console.error(`Local model failed too: ${innerError.message}`);
      // Last resort: cached or predefined response
      return { content: 'Sorry, I cannot answer right now. Please try again later.' };
    }
  }
};
```

Benefit: Reliability without depending on a single provider.
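The fallback above only triggers when a provider throws; a provider that hangs stalls the request. A generic sketch that tries providers in order and gives each a time limit (withFallback and withTimeout are hypothetical helpers; each provider is just an async function, so you can wrap NodeLLM.chat(...).ask or the Ollama client in it):

```javascript
// Run fn but reject if it takes longer than ms; always clear the timer.
// Note: this stops waiting, it does not cancel the underlying request.
function withTimeout(fn, ms) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error(`Timed out after ${ms} ms`)), ms);
  });
  // Promise.resolve().then(fn) turns a synchronous throw into a rejection
  return Promise.race([Promise.resolve().then(fn), timeout]).finally(() => clearTimeout(timer));
}

// Try each provider in order; return the first successful answer.
async function withFallback(providers, message, { timeoutMs = 10_000, fallbackContent } = {}) {
  for (const { name, ask } of providers) {
    try {
      return await withTimeout(() => ask(message), timeoutMs);
    } catch (error) {
      console.warn(`${name} failed: ${error.message}`);
    }
  }
  if (fallbackContent !== undefined) return { content: fallbackContent };
  throw new Error('All providers failed');
}
```

To actually abort a slow HTTP call, pass an AbortSignal to the provider as well.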

Debugging + Observability

Logging Prompts and Costs

```javascript
const winston = require('winston');

const logger = winston.createLogger({
  format: winston.format.json(),
  transports: [new winston.transports.File({ filename: 'ai-calls.log' })],
});

app.post('/api/chat', async (req, res) => {
  const { message } = req.body;
  const startTime = Date.now();
  const response = await llm.ask(message);
  const latency = Date.now() - startTime;

  // calculateCost is your own price-table lookup; the response fields
  // (model, tokens) depend on what your LLM library returns
  const estimatedCost = calculateCost(response.model, response.tokens);

  logger.info({
    timestamp: new Date().toISOString(),
    message: message.substring(0, 100), // truncated: prompts may contain user data
    model: response.model,
    tokens: response.tokens,
    latency_ms: latency,
    cost_usd: estimatedCost,
  });

  res.json(response);
});
```

Example log entry (illustrative values):

```json
{
  "timestamp": "2026-04-16T10:30:00Z",
  "message": "What is an API?",
  "model": "gpt-4o",
  "tokens": 145,
  "latency_ms": 890,
  "cost_usd": 0.0043
}
```

Example analytics aggregated from these logs: Average latency: 850ms | Total cost/month: $230 | Most used models: GPT-4 (60%), Mistral (40%).
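calculateCost isn't defined in the logging snippet. Because input and output tokens are priced differently, a single token total can't be priced exactly; this sketch assumes you can extract { inputTokens, outputTokens } from your library's response. The per-million prices are example values to keep in sync with your provider's pricing page, and local models cost nothing per token:

```javascript
// $ per 1M tokens; example values only, update them from your provider's pricing page
const PRICES = {
  'gpt-4o': { input: 2.5, output: 10 },
  mistral: { input: 0, output: 0 }, // local via Ollama: no per-token cost
};

function calculateCost(model, { inputTokens = 0, outputTokens = 0 } = {}) {
  if (!Object.hasOwn(PRICES, model)) return null; // unknown model: flag it rather than log $0
  const price = PRICES[model];
  return (inputTokens * price.input + outputTokens * price.output) / 1_000_000;
}

console.log(calculateCost('gpt-4o', { inputTokens: 1000, outputTokens: 1000 })); // 0.0125
```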

Common Production Mistakes

Mistake 1: Confusing Prototypes with Systems

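Danger: The code works on your laptop but falls over with 100 concurrent users.

```javascript
// ❌ BAD: No concurrency control
app.post('/api/chat', async (req, res) => {
  const response = await ollama.generate({ model: 'mistral', prompt: req.body.message, stream: false });
  res.json(response);
});
```

Problem: If Ollama processes one request at a time and 50 arrive simultaneously, they queue up: the last user waits roughly 50× as long as a single generation, while your server holds 50 open connections.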
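The fix is a gate in front of Ollama: at most N generations run at once, the rest wait in a bounded queue, and when the queue is full you answer 503 immediately instead of letting requests pile up. Sketched without dependencies (createLimiter is a hypothetical helper; the p-limit version that follows does the same with a library):

```javascript
// Run at most `concurrency` tasks at a time; queue up to `maxQueue` more.
function createLimiter(concurrency, maxQueue = Infinity) {
  let active = 0;
  const queue = [];

  const next = () => {
    if (active >= concurrency || queue.length === 0) return;
    active++;
    const { task, resolve, reject } = queue.shift();
    Promise.resolve()
      .then(task)
      .then(resolve, reject)
      .finally(() => {
        active--;
        next(); // start the next queued task, if any
      });
  };

  return function run(task) {
    if (queue.length >= maxQueue) {
      return Promise.reject(new Error('Queue full')); // caller should answer 503
    }
    return new Promise((resolve, reject) => {
      queue.push({ task, resolve, reject });
      next();
    });
  };
}
```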

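```javascript
// ✅ GOOD: Queue + Pool
// p-limit v4+ is ESM-only: use `import pLimit from 'p-limit'`, or p-limit@3 with require()
const pLimit = require('p-limit');

const limit = pLimit(4); // maximum 4 concurrent requests to Ollama (roughly match OLLAMA_NUM_PARALLEL)
const MAX_WAITING = 20; // beyond this, fail fast instead of queueing

app.post('/api/chat', async (req, res) => {
  if (limit.pendingCount >= MAX_WAITING) {
    return res.status(503).json({ error: 'Server busy, please retry shortly' });
  }
  const response = await limit(() =>
    ollama.generate({ model: 'mistral', prompt: req.body.message, stream: false }),
  );
  res.json(response);
});
```

Mistake 2: Not Monitoring Costs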

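Danger: An accidental recursive call generates a €2,000 OpenAI bill.

```javascript
// ❌ BAD: No token limit
const response = await llm.ask(userInput);

// ✅ GOOD: With limit (option names vary by library; check its docs)
const response = await llm.ask(userInput, {
  max_tokens: 500, // strict cap on the length of each answer
  temperature: 0.7,
});
```

A per-call cap bounds each answer, not a runaway loop; pair it with a per-user budget and the spending limits or alerts in your provider's billing settings.

Mistake 3: Prompt Injection Attacks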

Danger: A malicious user injects instructions into the prompt (e.g. "Ignore all previous instructions and...").

```javascript
// ❌ BAD: Direct concatenation
const prompt = `Reply to: ${userInput}`;
const response = await llm.ask(prompt);
```
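What protects you is role separation: chat APIs take a list of messages, and the system prompt travels in its own message instead of being glued to user text. A sketch of building that list in the OpenAI-style { role, content } format (also accepted by Ollama's /api/chat); buildMessages and the 4,000-character cap are illustrative assumptions. Role separation lowers the risk of prompt injection but does not eliminate it:

```javascript
const SYSTEM_PROMPT = [
  'You are a helpful assistant.',
  'Your role is to answer product questions.',
  'NEVER disclose internal or client information.',
].join('\n');

const MAX_INPUT_CHARS = 4000; // illustrative cap; also bounds token spend

// Instructions go only in the system message; user text goes only in the user message.
function buildMessages(userInput) {
  if (typeof userInput !== 'string' || userInput.trim() === '') {
    throw new Error('Empty input');
  }
  return [
    { role: 'system', content: SYSTEM_PROMPT },
    { role: 'user', content: userInput.slice(0, MAX_INPUT_CHARS) },
  ];
}
```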

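```javascript
// ✅ GOOD: System prompt kept separate from user input
const systemPrompt = `You are a helpful assistant.
Your role is to answer product questions.
NEVER disclose internal or client information.`;

const response = await llm.ask(userInput, {
  system: systemPrompt, // option name depends on your library
  // userInput travels as a separate user message: this reduces, but does not
  // eliminate, the chance that it overrides your instructions
});
```

A system prompt is guidance for the model, not a security boundary: keep secrets out of the prompt entirely and validate the model's output before acting on it.

2026 Roadmap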

- Now (Q2 2026): NodeLLM matures for multi-provider, Ollama's catalog of quantized GGUF models keeps growing, Vector DBs compete on latency.

- Q3 2026: Open-weight models (Llama 4 and Mistral Large 2 are already available) keep closing the gap with GPT-4-class models, API costs drop 30-50%, native streaming in all frameworks.

- Q4 2026: On-device inference (models in the browser), multi-turn Agents + native memory.

Conclusion: The Winning Stack

- For startups on a budget: Node.js (backend) + Ollama (local) + Redis (cache) + simple monitoring.

- For scalable SaaS: Node.js + NodeLLM (multi-provider) + Vector DB + full observability.

- For risk-averse enterprises: Node.js + OpenAI API + fallback to local + audit logging.

You don't need Python. You don't need datacenters. You don't need to pay €8,000/month on your OpenAI bill. In 2026, a production-capable AI stack fits on a MacBook and costs about €5/month in electricity.

The code you write today will still run in 2028. The provider you choose today might not exist tomorrow. Choose wisely.

Resources

- NodeLLM: github.com/node-llm/node-llm

- Ollama: ollama.com

- LangChain.js: js.langchain.com

- node-llama-cpp: github.com/withcatai/node-llama-cpp

- Monitoring: Prometheus + Grafana

- Vector DB: Pinecone, Weaviate, Qdrant